Minneapolis recently banned its police department from using facial recognition software, joining a small but growing group of U.S. cities that restrict the technology. While this decision is a step in the right direction for civil liberties, it isn’t enough. It’s time to take a hard look at why facial recognition software poses such a threat and how we can protect ourselves from potential misuse.

The Threat of Facial Recognition Software

Facial recognition software has become increasingly pervasive and sophisticated in recent years, with major companies such as Apple and Amazon investing heavily in developing and deploying the technology at scale. While the software offers benefits in convenience, security, and efficiency, it has also raised concerns about civil liberties violations. In this article, we explore what facial recognition software is, how it works, the threats it poses to civil liberties, and the measures that can protect our rights while preserving the benefits of the technology.

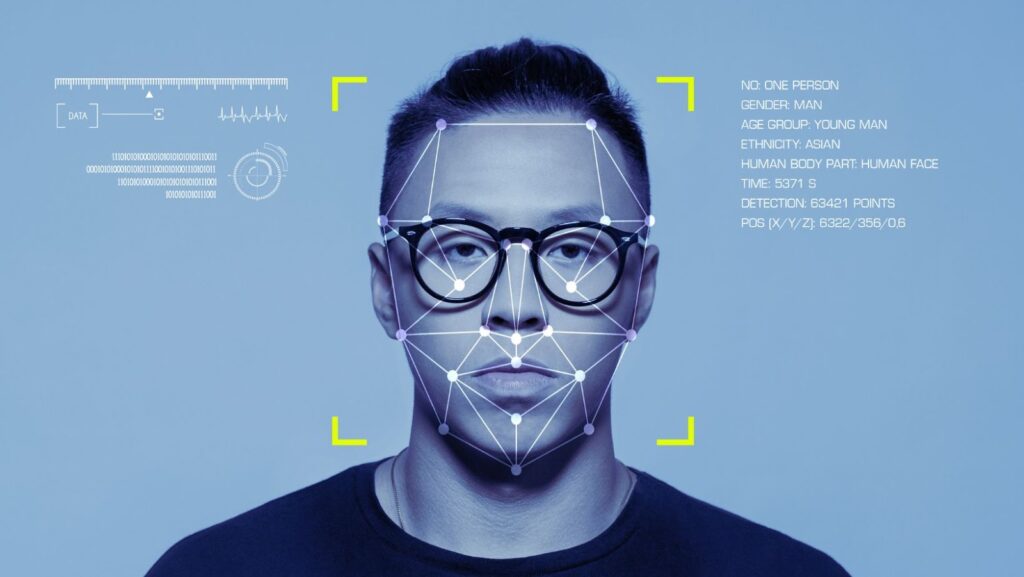

Facial recognition software is a form of artificial intelligence (AI) that uses algorithms to recognize faces in digital images or video frames. It can be used for a variety of tasks, such as authenticating users on digital applications or devices, recognizing faces in photos or videos during police investigations, or scanning crowds for potential suspects. To accomplish these tasks, the software extracts biometric features, such as a person’s facial shape or skin tone, and compares them against a database of reference images. Under favorable conditions, this allows it to recognize individuals with high accuracy at a distance and under varied lighting.
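The matching step described above can be sketched in a few lines. This is a minimal illustration rather than how any particular product works: it assumes each face has already been reduced to a short numeric feature vector (real systems use much longer vectors produced by a trained model), and the names `reference_db`, `best_match`, and the `threshold` value are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two feature vectors; 1.0 means identical direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)  # assumes neither vector is all zeros

def best_match(probe, reference_db, threshold=0.9):
    """Return (name, score) for the closest reference face.

    If even the closest face scores below the threshold, the name is None,
    meaning "no match". The threshold here is an arbitrary illustrative value.
    """
    best_name, best_score = None, -1.0
    for name, features in reference_db.items():
        score = cosine_similarity(probe, features)
        if score > best_score:
            best_name, best_score = name, score
    if best_score < threshold:
        return None, best_score
    return best_name, best_score

# Made-up feature vectors standing in for a reference image database.
reference_db = {
    "person_a": [0.9, 0.1, 0.3],
    "person_b": [0.2, 0.8, 0.5],
}

probe = [0.85, 0.15, 0.35]  # features from a new photo or video frame
name, score = best_match(probe, reference_db)
print(name, round(score, 3))
```

The threshold is where accuracy and civil liberties meet: set it too low and the system reports matches for people who are not in the database at all, which is the kind of false positive discussed later in this article.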

Minneapolis Bans its Police Department From Using Facial Recognition Software

In February 2021, the Minneapolis City Council voted to ban the use of facial recognition software (FRS) by the city’s police department and other city agencies. At the time, Minneapolis Mayor Jacob Frey made a statement about how FRS is a threat to civil liberties. The decision drew on research conducted by the University of Minnesota into how accurately FRS identifies members of certain races and genders. The research showed that when algorithms attempted to determine gender, they misclassified individuals with darker skin up to 35% of the time, most often labeling people who identify as female as male.

The research also showed that African Americans were more prone to false positive matches, in which a person is identified as someone they are not. The measure passed by Minneapolis took a strong stance against this technology and argued that it was incompatible with privacy expectations and civil liberty standards in America. In passing it, Minneapolis set an example for other cities across the country that are considering restrictions on FRS use within their jurisdictions. Supporters argue that such regulations safeguard civil liberties while allowing society to continue advancing technologically without violating personal rights and freedoms.

The Impact of Facial Recognition on Privacy

Facial recognition software is an increasingly popular technology used by governments and businesses, yet the potential ramifications for civil liberties are extremely concerning. This type of system is capable of recognizing individuals in real time and matching them to a database of stored images, such as driver’s license photos, passport databases, or social media profiles. The impact of facial recognition on privacy is profound. As governments adopt wide-scale facial recognition systems, individuals face an increased risk of privacy violations, particularly if these systems run without human oversight or require no explicit consent for use.

Furthermore, laws covering the storage and use of personal data have lagged well behind the deployment of facial recognition technology, leaving many people with insufficient protection against the misuse of their personal data. There has also been particular focus on ensuring that facial recognition technology does not lead to discrimination or profiling based on race, gender, or other protected characteristics. AI-based facial recognition algorithms have come under scrutiny recently due to reports of racial bias in how they identify faces, raising significant civil liberties concerns over ethnic profiling and false arrests.

Alternatives to Facial Recognition Software

As facial recognition technology continues to be adopted more broadly, it’s important to identify possible alternatives that can help ensure the protection of civil liberties without sacrificing the security and efficiency benefits of facial recognition software. The following are some potential alternatives to consider:

1. Biometric Identification Through Wearables: Wearable technologies like smartwatches and rings offer an alternative to traditional facial recognition technology. These wearables typically use Bio-NFC (Near Field Communication), a self-scanning system that reads biometric data from the wearer’s body, such as fingerprints, iris scans, or vocal patterns. This data can then be used to authenticate the wearer for identification purposes.

2. Key Card Access: Key card access is an existing method of securing premises that is already widely used, notably in airports and office buildings around the world. It provides an effective alternative to facial recognition software by relying on a card or other token containing encoded information (electronic identification) instead of physical features. This method requires no disclosure of biometric data and allows for automated access control with comprehensive audit trails.

3. Behavioral Analysis: Organizations may also consider investing in systems that use behavioral analysis as an alternative method of authentication. In this type of platform, the user is recognized by behavior, for example through background noise sampling, keystroke dynamics (which measure how quickly and accurately someone types on a keyboard), signature analysis, or handwriting evaluation with machine learning algorithms.

This type of system builds a biometric profile from users’ behavioral patterns without requiring any physical contact between the user and the computer, and with less intrusion on privacy than face scanning. It can also provide more security than traditional passwords, which may be shared with third parties or easily guessed by attackers.
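As a rough sketch of how the keystroke dynamics mentioned above might work, the example below compares the rhythm of a typed passphrase against an enrolled sample. It is a simplified illustration under stated assumptions: the function names, the timing values, and the `tolerance` of 0.05 seconds are all hypothetical, and a real system would use many enrollment samples and a statistical model rather than a single comparison.

```python
from statistics import mean

def inter_key_intervals(timestamps):
    """Seconds elapsed between each pair of consecutive key presses."""
    return [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]

def matches_profile(enrolled, attempt, tolerance=0.05):
    """Return True if the attempt's typing rhythm is close to the enrolled one.

    Both arguments are key-press timestamps (in seconds) for the same phrase.
    The rhythm matches when the mean absolute difference between the two sets
    of intervals is within `tolerance` seconds (an arbitrary illustrative value).
    """
    if len(enrolled) != len(attempt) or len(enrolled) < 2:
        return False
    enrolled_gaps = inter_key_intervals(enrolled)
    attempt_gaps = inter_key_intervals(attempt)
    difference = mean(abs(e - a) for e, a in zip(enrolled_gaps, attempt_gaps))
    return difference <= tolerance

# Made-up timestamps for a five-character passphrase.
enrolled = [0.00, 0.12, 0.31, 0.45, 0.70]
same_user = [0.00, 0.13, 0.30, 0.46, 0.69]
other_user = [0.00, 0.30, 0.42, 0.80, 0.95]

print(matches_profile(enrolled, same_user))
print(matches_profile(enrolled, other_user))
```

Note that only timing data is stored here, not an image of the person, which is the privacy advantage this approach claims over face scanning.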

Balancing Civil Liberties and Security

It is clear that facial recognition technology offers an unprecedented level of surveillance and profiling. If left unchecked, it is a direct threat to the privacy and freedom of citizens. Recent years have seen the technology grow in power and complexity, with use cases ranging from law enforcement to commercial applications. Its potential for misuse is therefore huge, and civil society is demanding controls on its use.

To ensure that facial recognition technology does not undermine civil liberties, governments must enact strong regulatory principles that address privacy, data security, and autonomy. In addition, there must be independent oversight of facial recognition technologies to prevent their misuse by companies or governmental entities. Finally, robust public engagement in this process is essential; only through collective decision-making between governments and citizens can a balance be found between convenience, security, and the protection of rights.
